By far the most troubling part of the massive AI takeover of the last few months is OpenAI’s lack of transparency on the issue. Although OpenAI, the creator of ChatGPT, has come out many times and talked about how transparent it is, its actions suggest otherwise.

There is a history of bias in AI. Combined with a lack of congressional regulation, no intervention from any part of the federal government, and little criticism from the public, the consequences will be devastating.

In this article, I will delve into OpenAI’s own statements about not disclosing certain information and what this means in terms of consequences for the world.

OpenAI’s March 2023 Technical Report

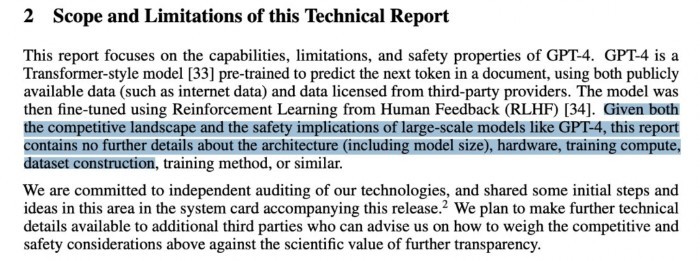

After the release of GPT-4, OpenAI published a technical report that aimed to cover a bit about how they go about developing their AI. However, almost immediately in that report they let us know that they are withholding information about what is obviously the most important aspect.

This includes details on the model’s architecture, hardware, and training methods.

One of the biggest criticisms that OpenAI has faced in the last few months concerns ChatGPT’s obvious bias against people of color and women. Essentially, it’s clear that the AI is trained on “white male” data, which makes it implicitly biased, regardless of what OpenAI claims.

How can a model like that accurately portray the experiences and emotions of people of color, women, LGBTQ+ individuals, and immigrants? It cannot. And this lack of cultural sensitivity is becoming increasingly problematic when AI-generated content is used to discuss issues deeply tied to these cultural identities and experiences.

In the same way I don’t want to read about the trans-black experience in America written by a white man, I wouldn’t want to read it from an AI trained on datasets of white men, without empathy and emotional intelligence to even understand what it is actually writing about.

The Problem With No OpenAI Transparency

It is deeply problematic that OpenAI won’t share its training methods and architecture for a variety of reasons, but it all boils down to the fact that there is barely any regulation on the topic.

AI has, over the years, invaded people’s privacy, taken over content creation, and stripped away jobs left and right in all sorts of sectors. Yet it doesn’t look like any regulations or laws are being put in place to control the spread and development of Artificial Intelligence.

So just to make it explicitly clear:

- OpenAI says publicly that they are withholding information

- No laws or regulations make this illegal

- ChatGPT is already biased – but facing no consequences for it

- No one seems to care or do anything about it at all

One of the reasons I feel incredibly annoyed about this whole statement is that OpenAI will go on social media and do interviews and talk about how transparent they are. They cannot omit the most important facts about their technology when it comes to bias and growth and still say that they’re “doing their best on transparency”.

Lack of Regulation Might Just Be The Real Issue

It’s not just up to OpenAI to ensure the safety of Artificial Intelligence development moving forward. I can honestly understand why they are doing what they are doing. By releasing more data they are telling their competitors how to do it as well, which might hurt their pockets. Corporations hate having their pockets hurt.

OpenAI Transparency would hurt OpenAI.

The problem is largely due to the fact that there is no congressional regulation stopping the development of a technology we know nothing about. If technology is developed without anything hindering it, and the companies developing this technology are left unregulated with no consequences, we can almost certainly expect to see potentially democracy-destroying results.

Put a Halt to the Madness

We have to start checking in on the developers and our governments when it comes to this unregulated technology. Artificial Intelligence has the potential to cause us serious harm in the future, and even big pro-AI billionaires such as Elon Musk (who was actually one of the founders of OpenAI and later left) and Bill Gates continuously warn against unregulated AI.

Unfortunately, there aren’t a lot of opportunities for us normal people to halt the progress of OpenAI and related corporations. All we can do is ensure the content that we consume is devoid of the bias that ChatGPT has, and the only way to do that is to consume human-made content only.

I absolutely despise the fact that a majority of content produced right now is produced with the assistance of AI language models, and I vow to do everything I can to at least stop this. I believe that all forms of media that use AI should, by law, be required to put a disclaimer on their content that clearly states AI was used in the creation of that content.

However, it doesn’t seem like that is going to happen any time soon. So as an alternative, I have created a way for content creators to easily state that their content is 100% human-made by putting any of our free No-Ai badges on their page.

The icon then links to this statement, and their content is quality checked by us to ensure that no AI was part of the process.

Stand up to regurgitated and dead content. Say No to AI-created content.

THIS ARTICLE WAS WRITTEN WITHOUT THE ASSISTANCE OF ARTIFICIAL INTELLIGENCE.