It seems that every single week a new story about the detrimental consequences of AI hits most major news outlets, yet each one is swept under a rug of ignorance and apathy. The latest in a string of stories about detecting AI and the Artificial Intelligence takeover is the tale of German photographer Boris Eldagsen’s entry to the Sony World Photography Awards.

As a sociological experiment, Eldagsen submitted an AI-generated image to the jury, and it ended up winning its category in the competition. As soon as it was clear that he had won, Eldagsen let the world know the image was AI-generated, turned down the prize, and asked for an open discussion on the topic.

A discussion that was quickly rejected.

It is important to discuss how we determine what is real and what is not, as this topic pertains to the fundamental nature of humanity. Eldagsen disclosed his use of AI for the sake of discussion, but his case raises the question of whether others are being truthful about theirs.

This article looks at the challenges and problems that arise from using AI in content creation. Using examples of harmful AI from just the past few months, I’m going to explore whether there really is a solution. How do we know what is real and what is not?

Detecting AI: An Impossible Task?

A recent survey by Tooltester found that a majority of Americans are unable to differentiate between AI-generated and human writing. The more sophisticated AI becomes, the more difficult it is for even people like me to spot it. As a result, corporations can mass-produce content incredibly cheaply.

If you don’t find this disturbing, I urge you to check out the rest of my blog to learn about the dire consequences and dangers of letting AI take over content creation online today. It is not just going to harm our mental health, our psyche, and our children; it could potentially tear down our very democracy.

More and more tales of AI fooling innocent consumers are hitting the internet, and stories like Eldagsen’s photo entry are just another tale in the sea of deception. Sony claims they do not want to have an open discussion about AI in competitions like this because Eldagsen was “openly fooling them” – which Eldagsen, for the record, has denied.

Yet this is a conversation that needs to be had.

We are entering a whole new world of cheating, plagiarism, and fake news as a result of AI, and there is currently nothing standing in its way. No government regulation, no congressional ruling, and no real software that can properly counter it in fields of research and education.

The Field of Education & Its Failed Attempts at a Solution

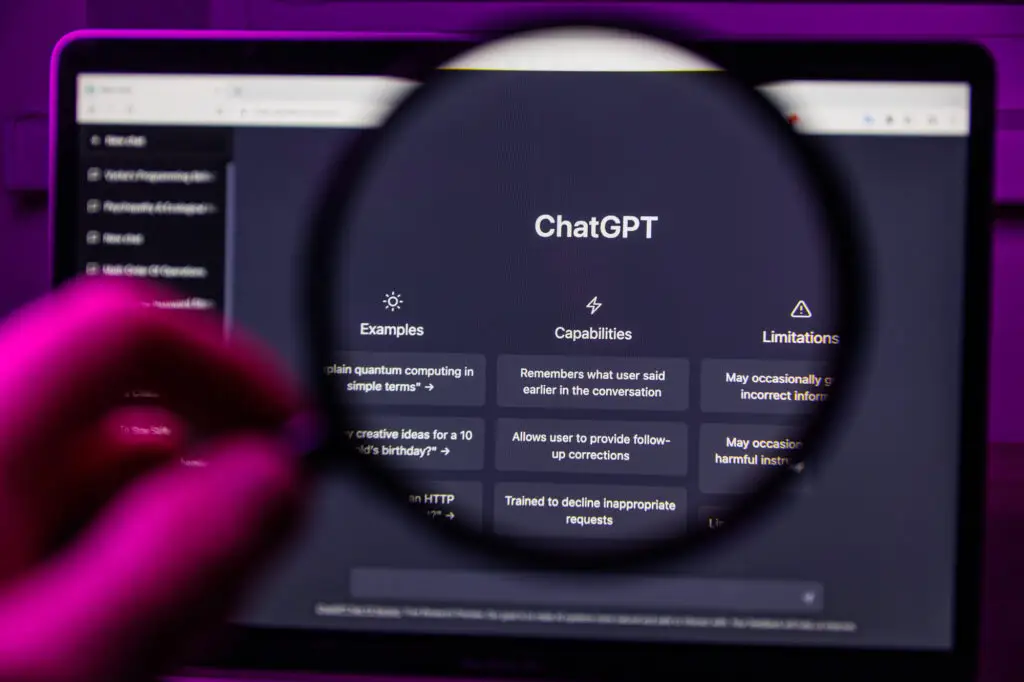

As you may have already heard, high schools, colleges, and even Ivy League universities across the US and the world are being plagued by the introduction of ChatGPT.

Whereas back in our day (or literally just a few months ago) students had to actually do their readings, learn everything about a topic, and then write original essays, they can now simply prompt ChatGPT and get a 10-page essay in just a few minutes.

As a result, universities have invested heavily in programs that claim to detect ChatGPT. But the reality is that it is almost impossible to do so. Stories of honest students being unjustly accused of relying on AI are overshadowing the stories of those who get caught, and for good reason.

As an example, OpenAI’s very own ChatGPT detector flagged Shakespeare’s Macbeth as being AI-generated. I’m going to assume that Macbeth was in fact not written by AI, as ChatGPT was released in 2022 and Macbeth was first published in 1623.

Another AI detector, GPTZero, flagged the US Constitution as being entirely AI-generated as well. Considering the Founding Fathers barely had a concept of what electricity was, I highly doubt that they used ChatGPT to write the Constitution.

The Numbers Are Clear: Consumers Do Not Trust AI

The reality is that ChatGPT is so young that there is no true counter to it, and therefore no surefire way of ensuring that the content you’re consuming is actually human-generated.

This is going to be a bigger and bigger issue moving forward, which is why people like Max Tegmark are attempting to halt the development of AI so that the world can catch up, and why we need to start putting a larger focus on AI regulation, not generation.

Going back to the survey from earlier, Tooltester also asked respondents about their trust in AI, and wouldn’t you know it, 71% of consumers would trust a brand less if they knew AI was involved.

Personally, not only do I trust a brand less if it relies on AI-generated content, I don’t trust it at all. AI-generated content has repeatedly been shown to contain misinformation, fake news, and consistent inaccuracies.

Lots of brands are already going full force on AI generation: BuzzFeed laid off 12% of its entire workforce, mostly focusing on content creators, to replace them with AI. For the record, this was done only a couple of weeks after ChatGPT was released.

What Is The Solution?

As I am sure you can gauge from the whole “no AI” thing on this website, I am clearly very, very against the rise of Artificial Intelligence in content creation. Not only do I find it annoying when trying to consume content, I also fear detrimental long-term effects on both our current and future generations.

The fact that massive corporations can get away with mass-producing regurgitated content made by a dead robot is a crime, and until there are government regulations cracking down on AI development, it is up to us to ensure we navigate this new world as carefully as possible.

The main issue as of right now is that yes, 71% of consumers would trust a brand less if they knew its content was AI-generated (and they should, because AI is less trustworthy). But, as stories like our German photographer’s prize-winning AI photo show, we simply aren’t capable of differentiating between the real and the fake.

Places like BuzzFeed, along with other content creators large and small, should be required to disclose their use of AI in their production.

However, it doesn’t look like this is happening any time soon, so as an alternative, I have created a way for content creators to easily state that their content is 100% human-made by putting any of our free No-Ai badges on their page.

The icon then links to this statement, and their content is quality checked by us to ensure that no AI was part of the process (and trust me, I am better than GPTZero).

We might not be able to force content creators to disclose their use of AI, but this way any honest blogger or artist will at least have the opportunity to prove that their content is real. That it contains human experiences, perspectives, empathy, and emotional intelligence.

Stand up to AI today.

THIS ARTICLE WAS WRITTEN WITHOUT THE ASSISTANCE OF ARTIFICIAL INTELLIGENCE.